Computer Performance Scaling

Introduction

Nobody knows which computer architecture or chip company will dominate the industry in five years, but we know one thing for sure: scalability will play a key role in determining the “winner”.

A scalable computer architecture should:

- Have very high peak performance (best case performance scaling)

- Have very high “average” performance (true performance scaling)

- Be applicable in a large number of use cases (application scaling)

- Be efficient to operate (energy scaling)

- Be usable by a large number of novice programmers (developer scaling)

- Be robust at very fine process geometries (process scaling)

- Be easy to implement (complexity scaling)

This post reviews some common computer performance scaling techniques and gives examples of how each could be used to reach an arbitrary, very large peak performance target of 1 PFLOPS (10^15 FLOPS).

Frequency Scaling

Scale up the clock frequency to get more performance. To reach 1 PFLOPS, run the processor at 1 PHz (warning: not practical!).

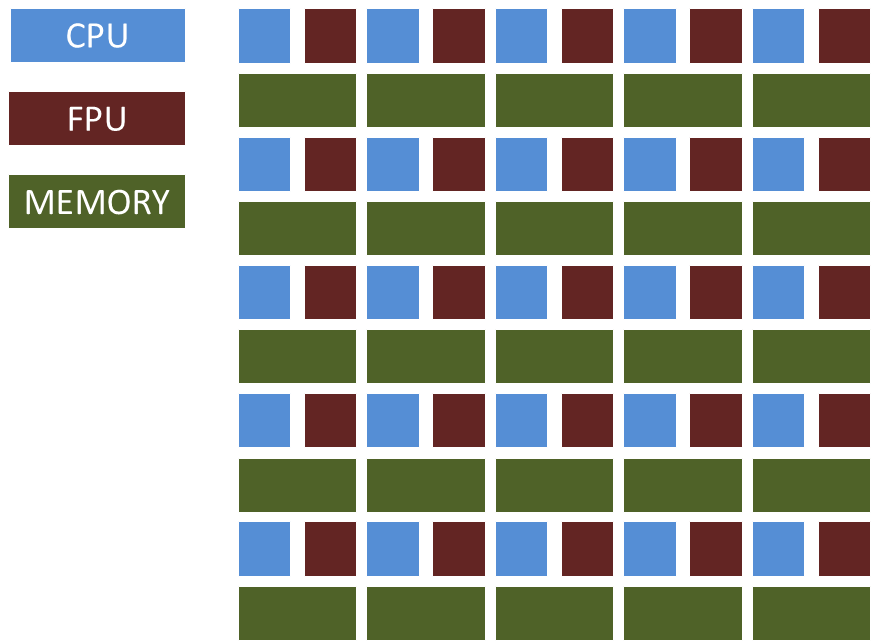
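To put a number on "not practical", here is a minimal Python sketch of the arithmetic. It assumes one floating point operation per clock cycle, which is what the 1 PHz figure implies. The light-travel calculation is an illustration added here, not something from the original analysis.

```python
# Assumption: one floating point operation per clock cycle,
# which is what the 1 PHz figure in the text implies.
TARGET_FLOPS = 1e15                    # 1 PFLOPS
FLOPS_PER_CYCLE = 1                    # assumed
SPEED_OF_LIGHT_M_PER_S = 299_792_458   # exact, by definition of the meter


def required_clock_hz(target_flops: float, flops_per_cycle: float) -> float:
    """Clock frequency a single compute unit needs to hit the target."""
    return target_flops / flops_per_cycle


clock_hz = required_clock_hz(TARGET_FLOPS, FLOPS_PER_CYCLE)
cycle_time_s = 1.0 / clock_hz

# Distance light covers in a vacuum during one clock cycle, in micrometers.
light_distance_um = SPEED_OF_LIGHT_M_PER_S * cycle_time_s * 1e6

print(f"required clock: {clock_hz:.0e} Hz")
print(f"cycle time:     {cycle_time_s:.0e} s")
print(f"light travels about {light_distance_um:.2f} micrometers per cycle")
```

At 1 PHz a clock cycle lasts one femtosecond, during which light in a vacuum covers roughly 0.3 micrometers. A signal could not cross a chip of any realistic size within a single cycle.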

SIMD/Vector Scaling

Scale performance by using many floating point units working in lockstep under the control of a single CPU. To reach 1 PFLOPS, use a 1GHz CPU that controls one million compute units working in lockstep on one instruction at a time.

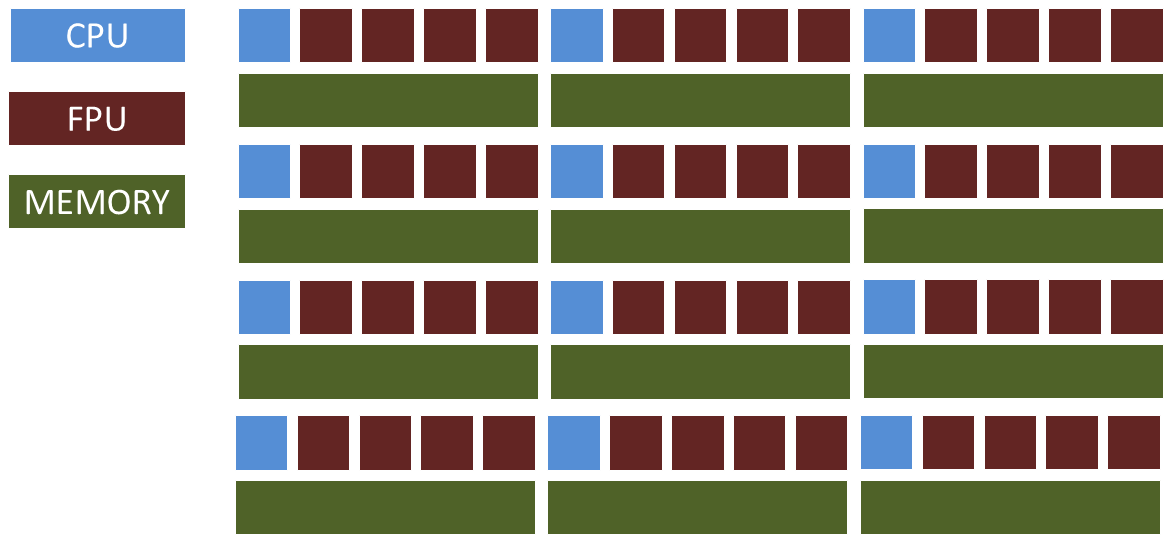
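The lockstep idea can be sketched in a few lines of Python. This is a toy model, not a description of any real SIMD hardware: `simd_execute` and `LANES` are hypothetical names, and the lane count is scaled down from one million to eight so the result is easy to read.

```python
from typing import Callable, List


def simd_execute(op: Callable[[float, float], float],
                 a: List[float], b: List[float]) -> List[float]:
    """Apply one instruction to every lane; all lanes perform the same op."""
    if len(a) != len(b):
        raise ValueError("operand vectors must have the same number of lanes")
    return [op(x, y) for x, y in zip(a, b)]


LANES = 8  # scaled down from the one million compute units in the text

a = [float(i) for i in range(LANES)]
b = [2.0] * LANES

# A single instruction stream: multiply, then add.
program = [lambda x, y: x * y, lambda x, y: x + y]

instructions_issued = 0
flops_done = 0
result = a
for instruction in program:
    result = simd_execute(instruction, result, b)
    instructions_issued += 1   # the control CPU issues one instruction...
    flops_done += LANES        # ...and every lane executes it

print(result)
print(f"{instructions_issued} instructions issued, {flops_done} FLOPs performed")
```

Each instruction issued by the control CPU yields as many floating point operations as there are lanes, which is where the scaling comes from. The catch is that every lane has to be doing the same thing at the same time.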

MIMD Scaling

Scale performance by using many independent general purpose CPUs working in parallel. To reach 1 PFLOPS, use one million 1GHz CPUs.
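As a rough illustration of what "independent" means here, the Python sketch below gives each simulated CPU its own program and its own data. The three programs are made up for the example. Python threads model the independence of the instruction streams only; because of the interpreter's global lock they do not show a real parallel speedup.

```python
from concurrent.futures import ThreadPoolExecutor


# Three unrelated programs, standing in for independent instruction streams.
def sum_of_squares(data):
    return sum(x * x for x in data)


def largest(data):
    return max(data)


def mean(data):
    return sum(data) / len(data)


# Each (program, data) pair stands for one general purpose CPU.
cpus = [
    (sum_of_squares, [1.0, 2.0, 3.0]),
    (largest, [4.0, 9.0, 2.0]),
    (mean, [10.0, 20.0]),
]

# No CPU waits on, or shares an instruction with, any other CPU.
with ThreadPoolExecutor(max_workers=len(cpus)) as pool:
    futures = [pool.submit(program, data) for program, data in cpus]
    results = [future.result() for future in futures]

print(results)
```

Unlike the lockstep case, nothing forces the CPUs to execute the same operation at the same time, so the approach fits a wider range of workloads at the cost of one full control unit per CPU.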

Heterogeneous Scaling

Scale performance by always using “the right tool” for the job. Every performance critical function could have its own accelerator logic.
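One way to picture "the right tool" is a dispatch table that routes each performance critical function to dedicated logic. The Python sketch below is purely illustrative: the accelerator names are hypothetical, and the fallback to a general purpose CPU for everything else is an assumption added here.

```python
from typing import Callable, Dict, List, Tuple


# Hypothetical accelerators, each handling exactly one critical function.
def sum_accelerator(values: List[float]) -> float:
    return sum(values)   # stand-in for dedicated reduction logic


def max_accelerator(values: List[float]) -> float:
    return max(values)   # stand-in for dedicated comparison logic


ACCELERATORS: Dict[str, Callable[[List[float]], float]] = {
    "sum": sum_accelerator,
    "max": max_accelerator,
}


def general_purpose_cpu(task: str, values: List[float]) -> float:
    """Assumed fallback for tasks that have no dedicated logic."""
    if task == "mean":
        return sum(values) / len(values)
    raise NotImplementedError(f"no implementation for task {task!r}")


def dispatch(task: str, values: List[float]) -> Tuple[str, float]:
    """Route a task to its accelerator if one exists, else to the CPU."""
    accelerator = ACCELERATORS.get(task)
    if accelerator is not None:
        return "accelerator", accelerator(values)
    return "cpu", general_purpose_cpu(task, values)


print(dispatch("sum", [1.0, 2.0, 3.0]))
print(dispatch("mean", [2.0, 4.0]))
```

The table also shows the cost of the approach: every new critical function needs another entry, which in hardware means another block of logic to design and verify.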

“Goldilocks” Scaling

Use some combination of the techniques above to scale up performance. For example, to reach 1 PFLOPS, use 250,000 1GHz CPUs (with 4 compute units each).
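The numbers quoted for each technique can be checked with one formula: peak FLOPS = CPUs × compute units per CPU × clock frequency × FLOPs per unit per cycle. The Python sketch below assumes one FLOP per unit per cycle, which is what the figures in this post imply. The function name `peak_flops` is mine.

```python
def peak_flops(cpus: int, units_per_cpu: int, clock_hz: float,
               flops_per_unit_per_cycle: int = 1) -> float:
    """Peak throughput, assuming every unit retires work on every cycle."""
    return cpus * units_per_cpu * clock_hz * flops_per_unit_per_cycle


GHZ = 1e9
PHZ = 1e15

# Configurations taken from the examples in this post.
configs = {
    "frequency":  peak_flops(cpus=1, units_per_cpu=1, clock_hz=PHZ),
    "simd":       peak_flops(cpus=1, units_per_cpu=1_000_000, clock_hz=GHZ),
    "mimd":       peak_flops(cpus=1_000_000, units_per_cpu=1, clock_hz=GHZ),
    "goldilocks": peak_flops(cpus=250_000, units_per_cpu=4, clock_hz=GHZ),
}

for name, flops in configs.items():
    print(f"{name:<12} {flops:.1e} FLOPS")
```

All four configurations land on the same 10^15 peak. What differs is how the factors are split, and that split drives the trade-offs in programmability, efficiency and complexity.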

Summary

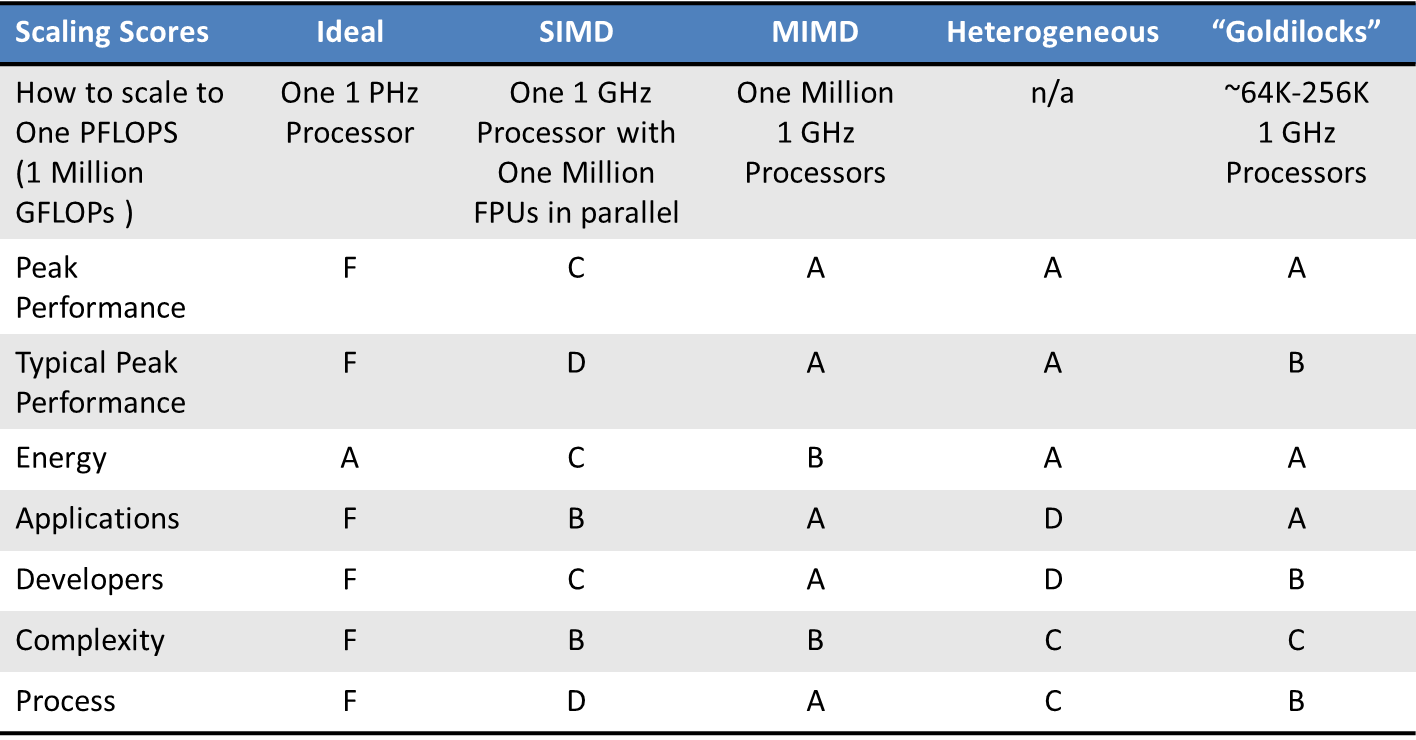

The following table summarizes the strengths and weaknesses of different scaling approaches. I admit that these grades are highly subjective, so please do write comments if you disagree with any of them.

Andreas Olofsson is the founder of Adapteva and the creator of the Epiphany architecture and Parallella Kickstarted open source computing project. Follow Andreas on Twitter.