Andreas Olofsson

Ten Processor Myths Debunked by the Epiphany-IV 64-Core Microprocessor

Introduction: The field of computer architecture is highly controversial, with strong arguments on both sides of every major question: x86 vs. RISC, MIMD vs. SIMD, SMP vs. heterogeneous, etc. This light-hearted article tries to debunk some of the “facts” that we have heard over and over again regarding our own design choices. Send me a note if you think the data is misleading or unconvincing.

MYTH #1: SIMD architectures are orders of magnitude more efficient than MIMD architectures.

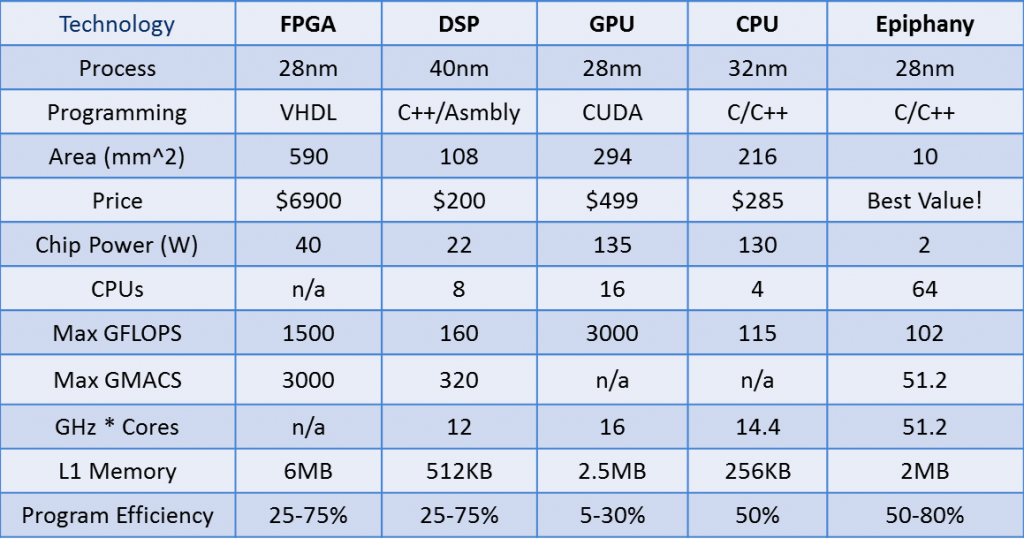

TRUTH: The overhead of program storage, instruction fetching, and instruction decoding is less than 30% in well-designed MIMD architectures. This SIMD advantage is roughly equivalent to the performance variations that come from process node differences, design team performance, and micro-architectural design choices. Table 1 below shows that the Epiphany IV actually outperforms the leading-edge SIMD-based GPGPUs at the same technology node in terms of energy and silicon efficiency.

Table 1: Multicore Technology Comparison

MYTH #2: C/C++ programmable processors can’t compete with FPGAs in terms of cost and power density.

TRUTH: Adapteva’s latest Epiphany IV product clearly outperforms FPGAs in terms of theoretical floating point energy efficiency and has the significant advantage of being truly ANSI C/C++ programmable. Table 1 shows hard data on silicon area efficiency for different high performance architectures. Adding flexibility reduces silicon efficiency, and as CMOS scaling slows down and eventually stalls, silicon efficiency will become the dominant design metric.

MYTH #3: Floating point math should be avoided in embedded systems.

TRUTH: When all the power components of an embedded system are added up, it becomes clear that the power and cost contribution of the floating point unit is negligible. For most markets the ease of use and time to market advantages of floating point far outweigh the few cents of extra cost added to the device. The figure below shows the layout of the 64-core 28nm Epiphany IV chip. The total chip area is 10mm^2, of which approximately 4mm^2 is dedicated to high speed local SRAM. The floating point unit only consumes 10% of the total chip area. (Note that in most processors, the floating point unit is less than 1% of the chip area.)

Figure 1: Epiphany IV Chip Layout

MYTH #4: We won’t see 1000 core chips until 2017.

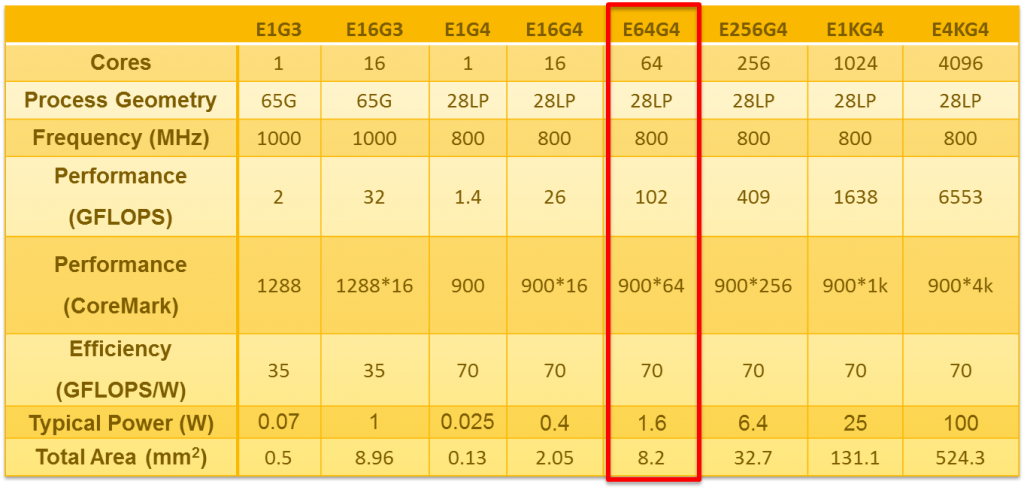

TRUTH: The latest Epiphany IV chip is only 10mm^2. A 1024-core Epiphany chip would occupy less than 140mm^2, far smaller than the current large GPUs and FPGAs (300-500mm^2) and smaller than Apple’s A5x mobile processor (169mm^2). We have already done layout exercises of such large arrays and are just waiting for the right opportunity to pull the trigger and create an actual product. Table 2 below shows the area for different Epiphany sizes. Now that Adapteva has clearly demonstrated the efficiency and feasibility of a 64-core Epiphany processor, a 1024-core chip should be considered practical as early as 2013.
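As a rough sanity check on the area figures quoted above, here is a minimal sketch. It assumes, purely for illustration, that the whole 10mm^2 die scales linearly with core count; the function and constant names are made up for this example, and Table 2 remains the reference for actual sizes.

```python
# Figures quoted in the text above.
EPIPHANY_IV_CORES = 64
EPIPHANY_IV_AREA_MM2 = 10.0
QUOTED_1024_CORE_AREA_MM2 = 140.0  # "less than 140mm^2", used as an upper bound
APPLE_A5X_AREA_MM2 = 169.0


def linear_scaled_area_mm2(cores):
    """Assumption: total die area grows in direct proportion to core count."""
    return EPIPHANY_IV_AREA_MM2 * cores / EPIPHANY_IV_CORES


naive_area = linear_scaled_area_mm2(1024)
print(f"Naive linear estimate for 1024 cores: {naive_area} mm^2")
print(f"Quoted upper bound:                   {QUOTED_1024_CORE_AREA_MM2} mm^2")
print(f"Apple A5x for comparison:             {APPLE_A5X_AREA_MM2} mm^2")
```

The naive estimate comes out at 160mm^2, above the quoted bound of 140mm^2 but still below the A5x. One plausible reading, which is an inference and not something stated here, is that part of the 64-core die (for example IO) does not grow with the size of the array.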

Table 2: Epiphany IP Product Table

MYTH #5: We won’t see 50 GFLOPS/W until 2017.

TRUTH: Adapteva’s latest 28nm chip demonstrates 70 GFLOPS/W at the core-supply level and 50 GFLOPS/W at the chip level (with IO). A custom high performance system using low power DRAM and Epiphany processors that reaches 50 GFLOPS/W could be built as early as 2013.
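The gap between the two efficiency figures can be turned into a power split with a little arithmetic. The sketch below assumes both numbers were measured at the same sustained throughput, which the text does not state; the helper names are hypothetical.

```python
# Efficiency figures quoted in the text above.
CORE_GFLOPS_PER_W = 70.0  # core-supply level
CHIP_GFLOPS_PER_W = 50.0  # chip level, including IO


def watts_for(gflops, gflops_per_watt):
    """Power needed to sustain a given throughput at a given efficiency."""
    return gflops / gflops_per_watt


def non_core_power_fraction(core_eff, chip_eff):
    """Share of chip power spent outside the core supply.

    For a fixed throughput F: P_core = F / core_eff and P_chip = F / chip_eff,
    so (P_chip - P_core) / P_chip = 1 - chip_eff / core_eff.
    """
    return 1.0 - chip_eff / core_eff


fraction = non_core_power_fraction(CORE_GFLOPS_PER_W, CHIP_GFLOPS_PER_W)
print(f"Non-core share of chip power: {fraction:.1%}")
print(f"Power for 1 TFLOPS at chip level: {watts_for(1000.0, CHIP_GFLOPS_PER_W)} W")
```

Under that assumption, a little under 30% of chip power goes to IO and other non-core consumers, and a sustained teraflop at the chip-level figure would draw 20 W.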

MYTH #6: Processors must have built in hardware caching to be useful.

TRUTH: Adapteva’s chips do not include traditional L1/L2 hardware caching, opting instead to maximize the number of bits of data storage available on chip. Scratchpad SRAMs have much higher density than L1/L2 caches, since they don’t include complicated tag matching and comparison circuits. For processors designed to run an operating system, hardware caching, memory protection, and memory management units are must-haves. For processors designed to be accelerators to a host, maximizing the amount of local memory proves to be more important. This point has been illustrated by the performance metrics achieved by the Epiphany IV chip running synthetic benchmarks and real applications.
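To give a feel for the storage a cache spends on bookkeeping, here is a minimal sketch that counts tag and state bits for a set-associative cache. The cache geometry in the example (32 KiB, 32-byte lines, 4-way, 32-bit addresses, 2 state bits per line) is hypothetical and not taken from any Adapteva or competitor datasheet. The count also covers storage bits only, so the comparators and replacement logic mentioned above would come on top.

```python
def cache_overhead_bits(capacity_bytes, line_bytes, ways,
                        addr_bits=32, state_bits=2):
    """Return (overhead_bits, data_bits) for a set-associative cache.

    Assumes capacity, line size and set count are powers of two.
    state_bits models per-line flags such as valid and dirty.
    """
    lines = capacity_bytes // line_bytes
    sets = lines // ways
    offset_bits = (line_bytes - 1).bit_length()  # log2(line_bytes)
    index_bits = (sets - 1).bit_length()         # log2(sets)
    tag_bits = addr_bits - index_bits - offset_bits
    overhead_bits = lines * (tag_bits + state_bits)
    data_bits = capacity_bytes * 8
    return overhead_bits, data_bits


overhead, data = cache_overhead_bits(32 * 1024, line_bytes=32, ways=4)
print(f"Tag and state bits: {overhead}")
print(f"Data bits:          {data}")
print(f"Overhead:           {overhead / data:.1%} of data storage")
```

For this hypothetical geometry the tag and state arrays add about 8% on top of the data bits. A scratchpad of the same capacity spends none of those bits, because software rather than hardware decides what lives in it.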

MYTH #7: Instruction set architectures must be large to support efficient compilers.

TRUTH: Modern compilers like GCC 4.7 do most of the major optimization tricks at the front end and give very good out-of-the-box performance without a large instruction set. Adapteva’s Epiphany instruction set is simple, but achieves excellent compiler performance on a large and varied set of benchmarks and kernel codes.

MYTH #8: Networks On Chip must be complicated to be effective.

TRUTH: Adapteva has designed its Network On Chip in the same spirit that it designed its Epiphany instruction set architecture: KISS. On-chip networks should be simpler than macro networks due to the abundance of wires, low inherent latencies, and low bit error rates. Adapteva’s network is simple yet demonstrates high quality of service across a wide range of traffic patterns. We will soon publish a separate white paper detailing the performance of the Epiphany Network On Chip.

MYTH #9: Parallel architectures are difficult to use.

TRUTH: This really depends on the specific architecture, but it’s generally an oversimplification. Fine-grained parallelism is difficult to grasp for the majority of programmers and has historically been confined to a group of expert high performance programmers. Coarse-grained parallelism, on the other hand, has been used successfully for decades and we often use it without much thinking. Consider for example a Windows or Linux operating system. There are tens or hundreds of threads running simultaneously within the operating system without the programmer ever having to consider parallel programming. It’s all taken care of by the operating system. Adapteva supports fine-grained as well as coarse-grained parallel programming, making it applicable to expert as well as novice programmers.
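The coarse-grained style described above can be sketched in a few lines. This is a generic illustration using Python's standard thread pool, not the Epiphany SDK or any Adapteva API; the function names are made up for the example. Each worker gets an independent chunk of data and the partial results are combined at the end, so the programmer never reasons about individual instructions running side by side.

```python
from concurrent.futures import ThreadPoolExecutor


def sum_of_squares(chunk):
    """Work done independently by each worker on its own chunk."""
    return sum(x * x for x in chunk)


def coarse_grained_sum(data, workers=4):
    """Split data into at most `workers` chunks and process them concurrently."""
    chunk_size = max(1, -(-len(data) // workers))  # ceiling division
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = list(pool.map(sum_of_squares, chunks))
    return sum(partial_sums)


print(coarse_grained_sum(list(range(10)), workers=3))  # prints 285
```

The example shows the structure rather than a speedup: in standard CPython the threads take turns on CPU-bound work. The same split, compute, and combine pattern carries over to processes or to separate cores.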

MYTH #10: It costs $100-400M to bring a new processor architecture to market.

TRUTH: Adapteva brought a new Instruction Set Architecture, Network On Chip infrastructure, four generations of processor chips, and a complete high quality tool chain that includes C/C++/OpenCL support to market for less than $2.5M. More information about this monster myth can be found here.